
By now we have all heard the term “Artificial Intelligence” (AI), with the mysterious technology representing the next cutting-edge “mega trend” in computing. AI can be thought of as the simulation of human intelligence by computer systems, with the most rapid advancements currently emerging in “machine learning”. Machine learning is the core building block of AI and can be thought of as an algorithm designed to train a computer to learn from data and act on it, without being explicitly programmed to do so. While purists will distinguish between “machine learning”, “deep learning”, “neural networks”, etc., for our purposes the differences are largely semantic.

Let’s start by considering “natural intelligence”, or the way people learn. This involves the collection of data from our surroundings over many years using our senses (sight, hearing, touch), along with feedback from trial and error. These efforts result in our ability to walk, talk, read, write and interact with our surroundings and other people. In machine learning, computer systems are fed massive quantities of information (“big data”), like medical records, credit card transactions, social media (tweets, posts, likes), etc., from which the system “learns”. The learning is either “supervised” or “unsupervised”. In supervised learning, a human provides data where known correct input-and-output pairs exist (e.g. what a tumor looks like in an MRI). This is similar to rote learning for people (i.e. memorization), and most machine learning is currently “supervised”.
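To make the idea of learning from known input-and-output pairs concrete, here is a minimal sketch in Python. It uses a 1-nearest-neighbour lookup, which is only one of many supervised techniques, and the measurements and labels are invented for illustration (they are not real medical data).

```python
from math import dist

# Hypothetical labelled examples: (features, known correct label).
# The two features are made-up (size, density) measurements.
TRAINING_PAIRS = [
    ((2.0, 0.10), "benign"),
    ((3.0, 0.20), "benign"),
    ((2.5, 0.15), "benign"),
    ((9.0, 0.80), "tumor"),
    ((8.0, 0.90), "tumor"),
    ((10.0, 0.70), "tumor"),
]


def predict(features, training_pairs=TRAINING_PAIRS):
    """Return the label of the closest labelled example (1-nearest-neighbour)."""
    _, nearest_label = min(
        training_pairs, key=lambda pair: dist(pair[0], features)
    )
    return nearest_label


print(predict((8.5, 0.85)))
print(predict((2.2, 0.12)))
```

The program never contains a rule for what a tumor looks like; it simply answers with the label of the most similar example a human has already labelled.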

The far more powerful form of machine learning, however, takes an “unsupervised” form where the algorithm is trained on a set of data where there is no set of right answers. Instead, using the data, the algorithm establishes a logic structure based on input weightings deduced from a probability distribution of observed outputs. In other words, if an input is known to produce a certain output more frequently, then that input will receive a higher weighting in the algorithm’s decision tree, with the decision tree getting more and more complex and accurate as more data is fed into it. For example, Google shows you more relevant search results once it has “learnt” your preferences after you have been using it for a while.
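As a rough illustration of learning without a set of right answers, the sketch below groups numbers into two clusters using a simplified k-means procedure. This is a common unsupervised technique chosen for brevity, not necessarily the weighting approach described above, and the transaction amounts are invented.

```python
def two_means(values, iterations=20):
    """Split unlabelled numbers into two groups (k-means with k=2)."""
    # Assumption for this sketch: start the two centres at the extremes.
    centres = [min(values), max(values)]
    clusters = [[], []]
    for _ in range(iterations):
        clusters = [[], []]
        # Assign each value to whichever centre is nearer.
        for value in values:
            nearer = 0 if abs(value - centres[0]) <= abs(value - centres[1]) else 1
            clusters[nearer].append(value)
        # Move each centre to the mean of its group (unchanged if the group is empty).
        centres = [
            sum(group) / len(group) if group else centres[i]
            for i, group in enumerate(clusters)
        ]
    return centres, clusters


# Hypothetical transaction amounts with no labels attached.
AMOUNTS = [12, 15, 11, 14, 13, 950, 1000, 1020]
centres, clusters = two_means(AMOUNTS)
print(centres)
print(clusters)
```

Nobody told the algorithm which amounts are “small” or “large”; the two groups emerge from the data alone.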

Bringing this back to real life, perhaps the most famous example of AI and machine learning was IBM’s Deep Blue, which defeated chess grandmaster and reigning world champion Garry Kasparov in 1997. Since then IBM has lost its lead to Alphabet (Google), which is considered to have the most sophisticated and advanced machine learning IP (intellectual property) on earth. This was demonstrated when Google’s DeepMind division cracked what was regarded as one of the most difficult problems in AI, and well out of reach for the technology at the time, when it defeated a world champion Go player. Go is considered to be a much more difficult game for a computer to win than other games such as chess, because its much larger branching factor makes it prohibitively difficult to use traditional AI methods (e.g. brute-force search of possible moves). So when AlphaGo (Google’s algorithm) became the first computer program to beat a professional Go player without handicaps in late 2015, and then defeated Lee Sedol, a 9-dan (highest ranking) professional, in March 2016, it sent shock waves through the industry and highlighted how far ahead Google was in the space. In fact, AlphaGo was subsequently awarded an honorary 9-dan ranking for its achievement.
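To give a feel for why the branching factor matters, the sketch below compares rough game-tree sizes. It assumes the commonly cited approximations of about 35 legal moves per turn over about 80 turns for chess, and about 250 moves over about 150 turns for Go; these figures are estimates, not exact counts.

```python
def game_tree_digits(branching_factor, depth):
    """Number of decimal digits in branching_factor ** depth."""
    return len(str(branching_factor ** depth))


# Approximate, commonly cited figures (assumptions, not exact values).
chess_digits = game_tree_digits(35, 80)
go_digits = game_tree_digits(250, 150)

print(f"Chess: a number with about {chess_digits} digits")
print(f"Go:    a number with about {go_digits} digits")
```

Under these assumptions the Go figure has roughly three times as many digits as the chess figure, which is why checking every possible line of play is not a workable strategy.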

Other examples of machine learning in our everyday lives include:

- Search and Ad Placement: As briefly discussed above, Google uses machine learning capabilities in its search algorithm to improve results and targeted advertising. Originally, Google used a set of predefined rules to generate responses to search queries; since 2016, however, it has progressively introduced machine learning based algorithms to optimize search results (following its AlphaGo success). Where this ends up is an open question, but longer term, search may evolve into a more natural-language, question-and-answer system with which you may be able to converse (i.e. like talking to a person, as explored in the 2013 film “Her”, starring Joaquin Phoenix and Scarlett Johansson).

- Recommendation Engines: Shopping recommendations and follow-on products / services based on previously observed patterns, as seen on Amazon, Facebook, Netflix, Spotify, etc., are all facilitated by machine learning architectures. Hence, when we search for a new pair of jeans on Google, we might get a display ad that promotes Levi’s in the frame beside the search results.

- Fraud Detection: Machine learning is used to identify insurance claims that appear abnormal and also to perform credit checks much more efficiently. Fintech start-up ACORN OakNorth uses machine learning to assist loan arrangers in assessing credit risk and managing its loan book. CEO Rishi Khosla was recently quoted in the Financial Times as saying competitors without AI typically employ 10x as many staff in credit assessments as ACORN OakNorth does for a similar volume of loans.
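As a simple illustration of recommendations based on previously observed patterns, the sketch below suggests items that were most often bought together with a given item. The purchase histories are invented, and real recommendation engines are far more sophisticated; this only shows the basic idea of counting co-occurrences.

```python
from collections import Counter

# Hypothetical purchase histories, one set of items per shopper.
BASKETS = [
    {"jeans", "belt"},
    {"jeans", "belt", "boots"},
    {"jeans", "t-shirt"},
    {"boots", "socks"},
    {"jeans", "belt", "t-shirt"},
]


def recommend(item, baskets=BASKETS, top_n=2):
    """Suggest the items most often bought together with `item`."""
    co_counts = Counter()
    for basket in baskets:
        if item in basket:
            # Count every other item that shared a basket with `item`.
            co_counts.update(basket - {item})
    return [other for other, _ in co_counts.most_common(top_n)]


print(recommend("jeans"))
```

The suggestions change automatically as more purchase histories are added, without anyone rewriting the logic.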

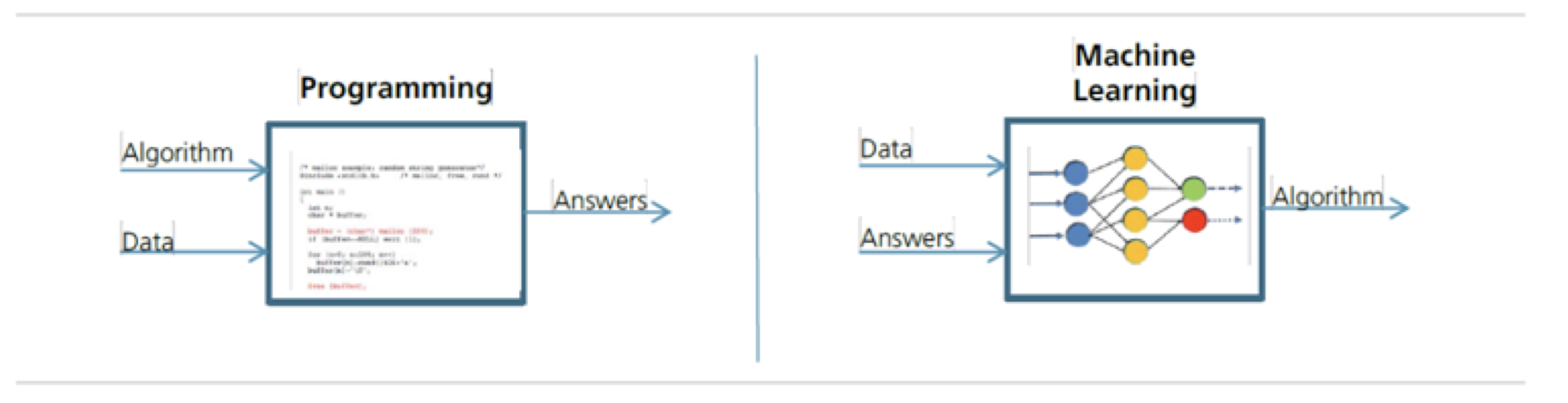

Our discussion wouldn’t be complete without exploring some of the concerns people have around AI, which generally centre on it growing into a self-aware, artificial super intelligence that will trigger unimaginable technological growth and result in the end of human civilization (i.e. the next phase of evolution where humans are no longer the dominant species). While this is a highly philosophical debate (which we will not have today), it is worth pointing out why some people fear this. Basically, machine learning is a new way to “program” a computer where the system takes in data and learns from it to reach decisions. This is different from traditional programming, where a human codes in the logic which is then followed to output an answer. This can foster fear that we are not in control of how the algorithm is coming up with its answers, which cannot always be explained using a traditional logical construct that people are familiar with. This idea, when extended, can raise questions in some people’s minds around what this ultimately means for the human race. That said, most emergent technologies over the course of history were met with a degree of fear and scepticism, with online banking and shopping being recent examples that were initially dismissed but ultimately became ubiquitous across the world.
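The contrast between the two approaches can be sketched in a few lines of Python. In the first function a person chooses the rule; in the second, the rule (here just a single threshold) is derived from labelled examples. The amounts, labels and function names are all hypothetical, and real systems learn far more complex logic than one number.

```python
# Traditional programming: a human writes the logic.
def flag_by_rule(amount):
    return amount > 500  # threshold picked by the programmer


# Machine learning: the logic is derived from data.
def learn_threshold(examples):
    """Pick the threshold that misclassifies the fewest labelled examples."""
    candidates = sorted(amount for amount, _ in examples)

    def errors(threshold):
        return sum((amount > threshold) != flagged for amount, flagged in examples)

    return min(candidates, key=errors)


# Hypothetical history: (transaction amount, was it flagged by a reviewer?)
EXAMPLES = [
    (20, False), (45, False), (80, False), (120, False),
    (300, True), (650, True), (900, True),
]

threshold = learn_threshold(EXAMPLES)


def flag_by_learned(amount):
    return amount > threshold


print(threshold)
print(flag_by_rule(250), flag_by_learned(250))
```

Here the learned threshold differs from the one the programmer guessed, so the two versions disagree on a 250 transaction. With one number the learned logic is still easy to inspect; with millions of learned weightings it is much harder, which is the source of the unease described above.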

Machine Learning versus Regular Programming

Source: UBS

While the impact of AI is still being understood, it is expected to significantly affect almost every sector on earth and, as with the creation of any disruptive technology, first movers will generally reap disproportionate returns. Given the deep dependence AI and machine learning have on data and the formation of complex algorithms based on trillions of observations, first movers have a significant competitive advantage. As such, “data owners” (social networks, insurance companies, etc.) and machine learning leaders (search, hyperscale cloud, etc.) are likely to see this first mover advantage become increasingly reinforced over time. Alphabet (Google) and Microsoft are global leaders in the AI and machine learning space, in addition to being two of the highest quality businesses in the world, and Montaka Global owns both in the portfolio.

Amit Nath is a Senior Research Analyst with Montaka Global Investments. To learn more about Montaka, please call +612 7202 0100.
